Sciences of Dub

Immersive Audio Formats

How to Read This Section

Each immersive format covered here approaches space differently.

These articles explain:

- What each format is
- How it works
- Where it’s used
- Why it matters

Taken together, they form a map — not a hierarchy — of how immersive sound is shaping the present and future of audio.

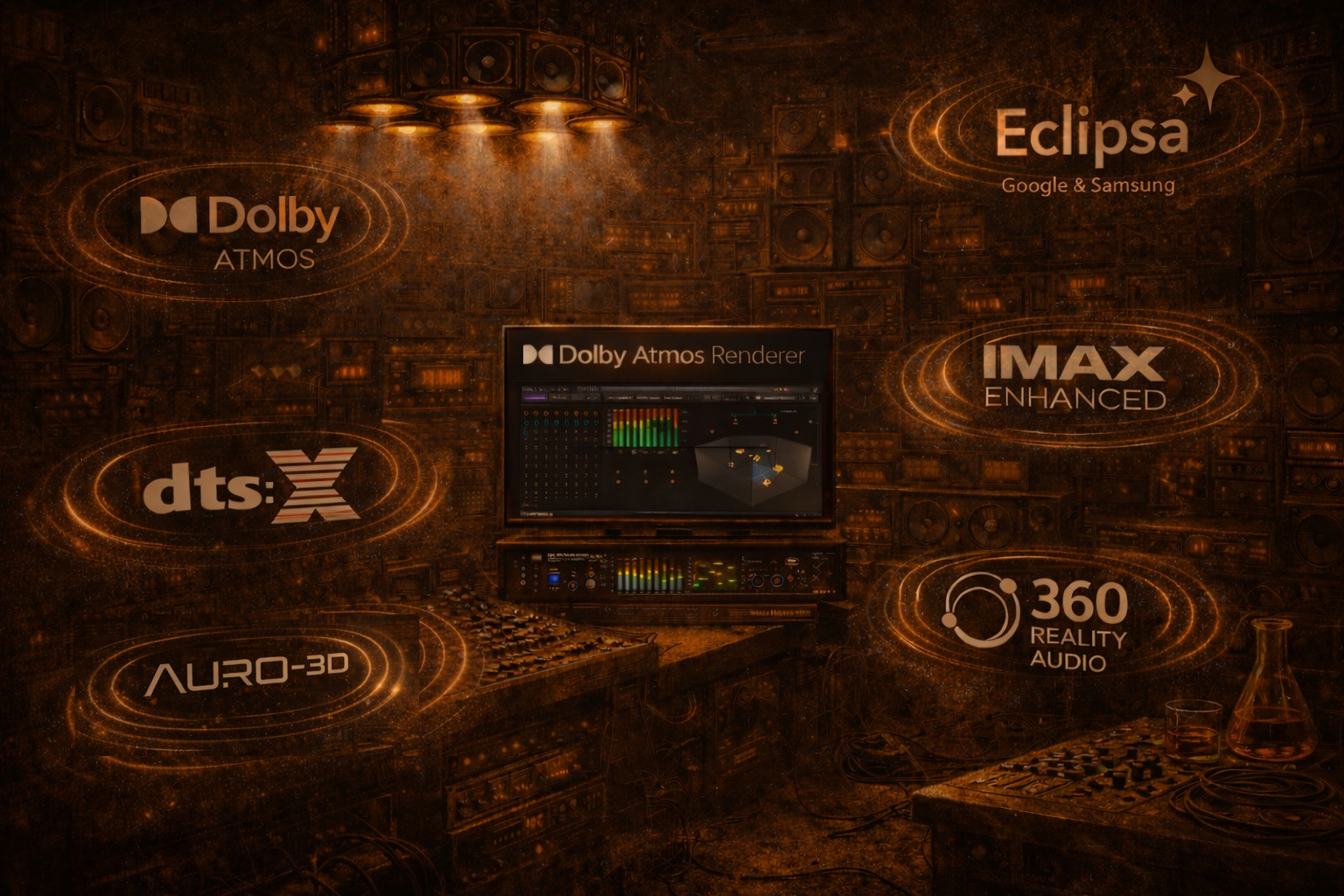

Immersive Audio Formats

Immersive audio formats are different ways of representing sound in three-dimensional space. Rather than limiting audio to left and right, immersive formats allow sound to exist around the listener, above them, behind them, and in motion, creating a sense of depth and physical presence.

These formats are not simply about adding more speakers or technical complexity. They represent a shift in how sound is conceived and organised — from fixed playback channels to space itself as a creative medium. Sound is no longer anchored to a single point in front of the listener, but can move, breathe, and interact with its environment.

Immersive audio reflects a broader change in how sound is created, delivered, and experienced today. As listening has moved toward headphones, streaming platforms, live installations, and interactive media, audio formats have evolved to adapt to these realities. The result is sound that feels less like reproduction and more like experience — placing the listener inside the music rather than in front of it.

From Channels to Space

For decades, audio was built around fixed channels:

- Mono
- Stereo
- Surround sound

These systems worked well, but they assumed a fixed listening position and a fixed speaker layout.

Immersive audio formats move beyond this by describing space itself:

- Where sound exists
- How it moves
- How it behaves in relation to the listener

Some formats focus on objects in space, others on soundfields, but all share a common goal: to free sound from fixed speaker positions.

Why Immersive Audio Exists Now

Immersive audio didn’t arrive by accident. Several changes made it inevitable:

- Headphones became the main listening environment
- Computing power increased dramatically
- Streaming replaced physical formats
- Audiences became comfortable with 3D and interactive media

Immersive formats are designed to adapt — whether you’re listening on headphones, in a studio, in a cinema, or inside an installation. The same mix can exist across many playback systems without being rebuilt from scratch.

This adaptability is key to why immersive audio has become viable at scale.

Different Formats, Different Philosophies

Not all immersive formats think about space in the same way.

Some formats:

- Treat sound as independent objects that move freely
- Focus on music delivery and streaming
- Prioritise compatibility and standardisation

Others:

- Describe the entire soundfield
- Are format-agnostic and flexible
- Are often used in research, VR, and sound art

Rather than competing, these approaches often coexist within modern workflows, each suited to different creative and technical needs.

Immersive Audio Is Not a Genre

Immersive audio is not a style of music and not a replacement for stereo.

It is a way of organising sound in space.

A good immersive mix doesn’t draw attention to itself. It enhances:

- Clarity
- Depth
- Movement
- Emotional impact

When done well, immersive audio feels natural — not technical.

Why This Matters for Artists and Listeners

Immersive formats allow sound to become:

- Physical
- Architectural
- Experiential

For artists, this opens new creative possibilities.

For listeners, it offers deeper engagement — especially in genres where space, bass, echo, and movement are already central to the music.

Immersive audio is not about novelty.

It’s about restoring space as a musical element.

Ambisonics

1. What Is Ambisonics?

Ambisonics is a way of capturing, representing, and reproducing sound as a full 3D sphere around a listener. Instead of mixing sound to speakers, Ambisonics describes the entire soundfield — front, back, sides, above, and below — as a mathematical model.

The key idea is that sound is stored independently of playback format. Whether the listener uses headphones, a small speaker array, or a large immersive installation, the same Ambisonic recording can be decoded appropriately.

In simple terms:

Ambisonics captures space itself, not a speaker mix.

2. First-Order vs Higher-Order Ambisonics Explained

Ambisonics comes in different spatial resolutions, known as orders.

- First-Order Ambisonics (FOA): Uses four channels to describe the soundfield. It’s efficient and widely supported, but spatial precision is limited.
- Higher-Order Ambisonics (HOA): Uses many more channels (nine at second order, sixteen at third), allowing for much finer spatial detail, sharper localisation, and more accurate movement.

Higher order doesn’t mean louder or more dramatic — it means clearer spatial definition. The higher the order, the more precisely sound can be placed and moved within the sphere.
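To make the link between order and channel count concrete: a full-sphere Ambisonic signal of order N carries (N + 1)² channels. The short sketch below simply evaluates that formula. The function name is ours, chosen for illustration, and is not part of any Ambisonics library.

```python
def ambisonic_channel_count(order: int) -> int:
    """Channels in a full-sphere (3D) Ambisonic signal of the given order."""
    if order < 0:
        raise ValueError("order must be zero or greater")
    return (order + 1) ** 2


# First order needs 4 channels; each step up adds progressively more.
for order in range(1, 6):
    print(f"order {order}: {ambisonic_channel_count(order)} channels")
```

The channel count grows quickly, which is why higher orders trade storage and processing cost for spatial precision.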

3. How Ambisonics Is Used in VR, Games, and Sound Art

Ambisonics is especially powerful in environments where perspective changes.

Because the soundfield exists independently of speakers:

- The listener can turn their head
- Move through sound
- Experience spatial changes dynamically

This makes Ambisonics ideal for:

- VR and 360-degree video
- Games and interactive media
- Immersive sound installations
- Experimental and spatial sound art

The sound doesn’t belong to speakers — it belongs to the environment.
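Head tracking is a good example of why this works. Because a first-order soundfield is just four numbers per sample (W, X, Y, Z), turning the listener's head can be handled by rotating the field in the opposite direction. The sketch below is a minimal illustration, assuming X points forward, Y points left, and Z points up. The function names are ours, and real VR engines also handle pitch and roll, which are left out here.

```python
import math


def rotate_yaw(w, x, y, z, angle_deg):
    """Rotate a first-order soundfield about the vertical axis.

    Assumes X points forward, Y points left, Z points up, and a positive
    angle turns the soundfield counterclockwise when seen from above.
    """
    a = math.radians(angle_deg)
    x_rot = x * math.cos(a) - y * math.sin(a)
    y_rot = x * math.sin(a) + y * math.cos(a)
    return w, x_rot, y_rot, z  # W (omni) and Z (height) are unaffected by yaw


def compensate_head_turn(w, x, y, z, head_yaw_deg):
    """Counter-rotate the field so sources stay fixed in the room."""
    return rotate_yaw(w, x, y, z, -head_yaw_deg)


# A source straight ahead: all directional energy sits on the X (front) axis.
front = (1.0, 1.0, 0.0, 0.0)

# The listener turns 90 degrees to the left, so the source should now
# sit on their right-hand side (negative Y).
w, x, y, z = compensate_head_turn(*front, head_yaw_deg=90.0)
print(round(x, 6), round(y, 6))
```

Nothing about the recording changes. Only the listener's orientation is applied at playback time.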

4. Encoding, Decoding, and Playback Explained

Ambisonics works in two main stages:

- Encoding: Sounds are captured or created and encoded into an Ambisonic format (often called B-format), which stores directional and spatial information.
- Decoding: That information is translated to:
  - Headphones (binaural)
  - Multispeaker arrays
  - Custom immersive installations

The same Ambisonic recording can be decoded differently depending on the playback system, making it extremely flexible and future-proof.
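The two stages can be sketched in a few lines. This is a simplified illustration, not a production decoder: it assumes W carries the signal at unit gain (other conventions scale W differently), and it decodes by pointing a virtual cardioid microphone at each speaker, which is one of the simplest possible approaches. The function names and the two speaker layouts are our own choices. Binaural decoding for headphones needs additional filtering that is not shown.

```python
import math


def direction_vector(azimuth_deg, elevation_deg):
    """Unit vector for a direction: X forward, Y left, Z up."""
    az = math.radians(azimuth_deg)
    el = math.radians(elevation_deg)
    return (math.cos(az) * math.cos(el),
            math.sin(az) * math.cos(el),
            math.sin(el))


def encode_foa(sample, azimuth_deg, elevation_deg=0.0):
    """Encode one mono sample into first-order components (W, X, Y, Z).

    Assumption: W carries the sample at unit gain; other conventions
    apply a different weighting to W.
    """
    ux, uy, uz = direction_vector(azimuth_deg, elevation_deg)
    return (sample, sample * ux, sample * uy, sample * uz)


def decode_to_speakers(bformat, speaker_directions):
    """Feed each speaker from a virtual cardioid microphone aimed at it."""
    w, x, y, z = bformat
    feeds = []
    for azimuth_deg, elevation_deg in speaker_directions:
        ux, uy, uz = direction_vector(azimuth_deg, elevation_deg)
        feeds.append(0.5 * (w + x * ux + y * uy + z * uz))
    return feeds


# One encoded source, 45 degrees to the front-left...
encoded = encode_foa(1.0, azimuth_deg=45.0)

# ...decoded for two different (hypothetical) speaker layouts.
square = [(45, 0), (135, 0), (-135, 0), (-45, 0)]
stereo = [(30, 0), (-30, 0)]
print([round(g, 3) for g in decode_to_speakers(encoded, square)])
print([round(g, 3) for g in decode_to_speakers(encoded, stereo)])
```

The encoded signal is created once. Only the list of speaker directions changes between the two decodes.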

5. Ambisonics vs Dolby Atmos: What’s the Difference?

Both formats are immersive, but they approach space differently.

- Ambisonics
  - Soundfield-based
  - Format-agnostic
  - Common in research, VR, installations, and sound art
- Dolby Atmos
  - Object-based
  - Industry-standardised
  - Widely used in music, film, and commercial delivery

Ambisonics describes how space behaves.

Atmos focuses on where individual sounds go.

In many modern immersive workflows, Ambisonics and Atmos are used together, not in opposition.

Dolby Atmos

1. What Is Dolby Atmos?

Dolby Atmos is an object-based immersive audio format that allows sound to exist and move freely in three-dimensional space — around the listener and above them, not just in front.

Traditional audio formats are built around fixed speaker channels such as left, right, centre, and surround. Dolby Atmos breaks away from this model by treating sounds as independent audio objects, each carrying information about what the sound is and where it should be positioned in space. This includes height, distance, and movement over time.

Instead of mixing sound to speakers, Dolby Atmos mixes sound into space. During playback, a system called a renderer calculates how to reproduce each sound based on the available setup — whether that’s a cinema with dozens of speakers, a studio system, a live immersive installation, or a pair of headphones.

This means the same Dolby Atmos mix can adapt intelligently to many different environments without needing to be remixed for each one. The creative intent remains intact, while the playback system handles the translation.

In practical terms, Dolby Atmos allows music, sound design, and storytelling to feel more physical, more spacious, and more immersive. Sound can hover, circle, approach, recede, or remain anchored — making space itself an expressive part of the work rather than a limitation.

2. Objects vs Channels: Why Dolby Atmos Is Different

To understand why Dolby Atmos is such a shift, it helps to compare channels and objects.

In traditional audio, sounds are assigned to fixed channels. A guitar might live in the left speaker, a vocal in the centre, a reverb in the surrounds. Once the mix is finished, those sounds are locked to those outputs.

Dolby Atmos replaces this approach with objects. An object is a sound paired with positional metadata — information describing where it exists in three-dimensional space and how it moves over time.

Because objects are not tied to specific speakers:

- They can be repositioned dynamically
- They scale across different systems
- They remain consistent across formats

A renderer decides how to reproduce each object based on the playback environment. This makes Dolby Atmos flexible, scalable, and future-proof.
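The idea of an object plus a renderer can be illustrated with a toy example. To be clear, this is not Dolby's renderer or its metadata format: the `AudioObject` class, its field names, and the panning rule are all assumptions made for illustration. The sketch only handles a horizontal ring of speakers and shares each object between the two speakers either side of it using constant-power panning.

```python
import math
from dataclasses import dataclass


@dataclass
class AudioObject:
    """A sound plus positional metadata (field names are hypothetical)."""
    name: str
    azimuth_deg: float  # 0 = straight ahead, positive = towards the left


def render_gains(obj, speaker_azimuths_deg):
    """Return one gain per speaker for a horizontal ring of speakers.

    Uses constant-power panning between the two speakers either side of
    the object. Assumes at least two speakers at distinct azimuths.
    """
    if len(speaker_azimuths_deg) < 2:
        raise ValueError("need at least two speakers")
    count = len(speaker_azimuths_deg)
    # Walk the ring counterclockwise, starting from the smallest angle.
    ring = sorted(range(count), key=lambda i: speaker_azimuths_deg[i] % 360)
    gains = [0.0] * count
    for k in range(count):
        a, b = ring[k], ring[(k + 1) % count]
        start = speaker_azimuths_deg[a] % 360
        span = (speaker_azimuths_deg[b] - speaker_azimuths_deg[a]) % 360
        offset = (obj.azimuth_deg - start) % 360
        if offset <= span:
            t = offset / span  # 0 at speaker a, 1 at speaker b
            gains[a] = math.cos(t * math.pi / 2)
            gains[b] = math.sin(t * math.pi / 2)
            break
    return gains


# The same object, rendered for two different layouts.
vocal = AudioObject("vocal", azimuth_deg=0.0)
print(render_gains(vocal, [30, -30]))             # stereo pair
print(render_gains(vocal, [45, 135, -135, -45]))  # four-speaker ring
```

The object's metadata never mentions a speaker. The layout is supplied only at render time, which is the property that lets one mix travel between systems.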

This shift from channels to objects is the core reason Atmos works across cinema, music, live sound, installations, and headphones using the same underlying mix.

3. How Music Is Mixed in Dolby Atmos

A Dolby Atmos music mix is typically built from a combination of beds and objects.

The bed (often 7.1.2) provides a stable foundation for core musical elements, while objects are used for precision, movement, and spatial detail. Vocals, instruments, effects, and ambience can all be treated as objects when appropriate.
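For readers new to layout labels such as 7.1.2, the three numbers are read as ear-level speakers, low-frequency effects (LFE) channels, and height speakers. The small helper below, whose name is our own, adds them up to give the number of bed channels.

```python
def bed_channel_count(layout: str) -> int:
    """Count channels in a layout label such as "7.1.2".

    The numbers are read as ear-level speakers, LFE channels, and height
    speakers. A missing third number, as in "5.1", means no height speakers.
    """
    parts = [int(p) for p in layout.split(".")]
    if not 2 <= len(parts) <= 3:
        raise ValueError(f"unexpected layout label: {layout!r}")
    return sum(parts)


for label in ("5.1", "7.1.2", "7.1.4"):
    print(label, "->", bed_channel_count(label), "channels")
```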

In music, Dolby Atmos is most effective when used musically rather than theatrically. Instead of throwing sounds around for effect, producers often use Atmos to:

- Create depth and separation
- Open up dense arrangements
- Allow reverbs and delays to breathe
- Let space become part of the groove

Well-mixed Atmos music doesn’t feel gimmicky. It feels clearer, deeper, and more natural, especially in genres where space and texture play a central role.

4. Dolby Atmos for Headphones and Binaural Rendering

Although Dolby Atmos supports large speaker systems, most listeners experience it on headphones.

To make this work, Atmos uses binaural rendering — a process that simulates how sound reaches human ears in real space. This involves subtle timing differences, filtering, and spatial cues that allow the brain to perceive direction, height, and distance.
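One of those timing cues can be estimated with a classic textbook model. The sketch below uses Woodworth's spherical-head approximation to estimate how much later a sound reaches the far ear. It is only an illustration of the kind of cue involved: real binaural renderers rely on measured head-related filters rather than this formula, and the head radius used here is an assumed average.

```python
import math

SPEED_OF_SOUND = 343.0  # metres per second, in air at about 20 °C
HEAD_RADIUS = 0.0875    # metres; a commonly used average, assumed here


def interaural_time_difference(azimuth_deg):
    """Approximate arrival-time gap between the ears, in seconds.

    Woodworth's spherical-head approximation, for sources in the
    horizontal plane from straight ahead (0) to directly to one side (90).
    """
    if not 0.0 <= azimuth_deg <= 90.0:
        raise ValueError("azimuth must be between 0 and 90 degrees")
    theta = math.radians(azimuth_deg)
    return (HEAD_RADIUS / SPEED_OF_SOUND) * (theta + math.sin(theta))


for angle in (0, 30, 60, 90):
    microseconds = interaural_time_difference(angle) * 1e6
    print(f"{angle:>2} degrees: {microseconds:.0f} microseconds")
```

The differences are well under a millisecond, yet they are enough for the brain to judge direction.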

When done well:

- Sounds feel external rather than inside the head
- Height and movement are perceptible
- The mix retains its spatial intent on simple stereo playback

No special headphones are required. The realism depends on the quality of the mix and the listener’s environment.

This headphone compatibility is one of the reasons Dolby Atmos has become widely adopted — immersive audio now fits how people actually listen to music today.

5. Dolby Atmos in Live Performance and Installations

Dolby Atmos is increasingly used beyond studios and streaming platforms.

In live performance and installation contexts, Atmos allows sound to:

- Surround the audience
- Move through physical space
- Interact with architecture

Speakers can be arranged overhead, in-the-round, or distributed throughout a venue. The renderer adapts the mix to the system, allowing sound to feel embedded within the environment rather than projected from a single direction.

This approach opens up new creative possibilities for concerts, exhibitions, and immersive events — where sound becomes spatial, physical, and experiential, not just something you listen to from a distance.

Sony 360 Reality Audio

1. What Is Sony 360 Reality Audio?

Sony 360 Reality Audio is an object-based immersive audio format created specifically for music. Rather than placing sound across fixed left and right channels, it allows individual musical elements — vocals, instruments, rhythms, and effects — to be positioned freely within a full 360-degree sound field around the listener.

Each sound exists as an independent object with spatial information attached. This means music is no longer limited to a flat, forward-facing presentation. Instead, it can surround the listener, creating a sense of depth, width, and immersion while maintaining musical clarity and balance.

Sony 360 Reality Audio is designed to make the listener feel inside the performance, not observing it from a distance. The emphasis is on immersion that serves the music, rather than spectacle or cinematic effects.

2. How Sony 360 Reality Audio Positions Sound Around the Listener

In Sony 360 Reality Audio, every sound object includes metadata describing:

- Its position in space
- Its distance from the listener
- Its relationship to other sounds

These objects are arranged within a virtual sphere surrounding the listener. During playback, the system calculates how each sound should be heard based on the listening environment — most commonly headphones.
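As a generic illustration of position and distance metadata on a sphere, the sketch below stores a direction and a distance for each object, converts them to a point in space, and applies a simple inverse-distance level drop. It does not reflect Sony's actual metadata schema or rendering rules: the class, its field names, and the gain law are all assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class SphereObject:
    """Hypothetical object metadata: a direction plus a distance."""
    name: str
    azimuth_deg: float    # 0 = front, positive = left
    elevation_deg: float  # 0 = ear level, positive = up
    distance_m: float     # distance from the listener, in metres

    def cartesian(self):
        """Position as (x, y, z): X forward, Y left, Z up."""
        az = math.radians(self.azimuth_deg)
        el = math.radians(self.elevation_deg)
        d = self.distance_m
        return (d * math.cos(az) * math.cos(el),
                d * math.sin(az) * math.cos(el),
                d * math.sin(el))

    def distance_gain(self, reference_m=1.0):
        """Inverse-distance level drop, capped at unity inside the reference."""
        return reference_m / max(self.distance_m, reference_m)


guitar = SphereObject("guitar", azimuth_deg=90.0, elevation_deg=0.0, distance_m=2.0)
print([round(v, 3) for v in guitar.cartesian()], guitar.distance_gain())
```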

Because sounds are not locked to specific speakers, the mix remains flexible. Instruments can sit beside, behind, or above the listener, while vocals or rhythmic elements can remain grounded and focused.

The result is a spatial image that feels coherent and musical, rather than exaggerated or disorienting.

3. Sony 360 Reality Audio vs Dolby Atmos for Music

Sony 360 Reality Audio and Dolby Atmos are both immersive formats, but they approach music differently.

Sony 360 Reality Audio is:

- Designed primarily for music playback
- Fully object-based, with no reliance on channel beds
- Optimised for headphone listening and streaming platforms

Dolby Atmos:

- Originated in cinema but has expanded into music
- Uses a combination of beds and objects
- Is designed to scale across cinemas, studios, live spaces, and headphones

In practice, Sony 360 Reality Audio often feels like a circular musical space around the listener, while Dolby Atmos can feel like placing the listener inside a room or environment.

Both formats coexist in modern workflows, offering different creative approaches rather than competing solutions.

4. How Binaural Playback Works in Sony 360 Reality Audio

Most listeners experience Sony 360 Reality Audio through headphones.

To achieve immersion over headphones, the format uses binaural rendering — a technique that simulates how sound arrives at human ears in real space. This includes subtle timing differences, filtering, and spatial cues that allow the brain to perceive direction, height, and distance.

The goal is to make sounds feel external, as if they exist around the listener rather than inside their head. No specialist headphones are required, though good playback systems and well-crafted mixes significantly enhance the experience.

This headphone-first design reflects how music is consumed today and is central to why Sony 360 Reality Audio works at scale.

5. Why Sony 360 Reality Audio Matters Today

Sony 360 Reality Audio fits naturally into modern listening habits.

It matters because:

- Music listening is now largely headphone-based
- Streaming platforms dominate music distribution
- Audiences are open to immersive experiences without complex setups

By focusing on object-based music immersion that works seamlessly on headphones, Sony 360 Reality Audio lowers the barrier to immersive listening. Listeners don’t need specialist systems — they simply press play.

For artists and producers, the format offers a way to explore space, depth, and movement while keeping music central. For listeners, it offers a deeper, more engaging relationship with sound.

Eclipsa Audio

1. What Is Eclipsa Audio?

Eclipsa Audio is an open, next-generation immersive audio format and delivery framework developed by Google and Samsung and built on the Alliance for Open Media’s IAMF (Immersive Audio Model and Formats) specification. It is designed to support spatial and immersive sound across a wide range of platforms without relying on closed, proprietary ecosystems.

At its core, Eclipsa Audio treats immersive sound as a fundamental part of modern digital media, not a specialist or premium add-on. It is built to work naturally with contemporary video, web, and streaming technologies, allowing immersive audio to be delivered at scale — across browsers, devices, and operating systems.

Unlike formats that were originally designed for cinema or specialist studio environments, Eclipsa Audio is shaped by how media is actually consumed today: through online video, mobile devices, headphones, interactive content, and web-based platforms. Its design prioritises interoperability, flexibility, and accessibility, making immersive audio easier to implement for developers, platforms, and creators.

Eclipsa Audio supports object-based and spatial audio principles, allowing sounds to be positioned and moved within three-dimensional space. However, its defining characteristic is not a particular mixing style or listening format, but its commitment to openness. By aligning with open media standards, Eclipsa Audio aims to reduce technical barriers, licensing friction, and platform lock-in that can limit the spread of immersive sound.

In simple terms, Eclipsa Audio represents a shift away from immersive audio as a niche or branded experience, and toward immersive sound as a baseline capability of digital media — something that can live naturally on the web, scale globally, and evolve alongside future technologies.

2. What Does Eclipsa Audio Bring to the Table?

What Eclipsa Audio brings is a fundamentally different set of priorities to immersive audio.

Where many immersive formats were designed around premium environments — cinemas, studios, or tightly controlled playback systems — Eclipsa Audio is designed around reach and adaptability. Its focus is not on a single ideal listening setup, but on ensuring immersive sound can travel across platforms, devices, and delivery methods with minimal friction.

This includes:

- Compatibility with modern web and streaming technologies
- Alignment with open media standards
- Reduced dependence on proprietary toolchains or licensing

In practice, this makes immersive audio more accessible to developers, independent creators, and platforms that want spatial sound without being locked into closed ecosystems. Eclipsa Audio prioritises infrastructure over branding, enabling immersive sound to function as a practical, scalable medium rather than a specialist feature.

3. How Eclipsa Audio Approaches Immersive Sound

Eclipsa Audio is built around spatial and object-based audio principles, allowing sounds to exist as elements that can be positioned and moved within three-dimensional space.

Rather than assuming a fixed speaker layout or listening context, it is designed to adapt dynamically to different playback environments. These may include:

- Headphones
- Mobile devices
- Consumer speaker systems
- Browser-based playback
- Multi-speaker installations

The emphasis is on translation rather than optimisation — ensuring that immersive intent survives as audio moves between environments. This makes Eclipsa Audio particularly suitable for interactive media, online video, and future-facing content where the listening context cannot be tightly controlled.

In this sense, Eclipsa Audio treats immersive sound as data-rich and flexible, rather than tied to a single ideal presentation.

4. How Eclipsa Audio Fits Into the Wider Immersive Ecosystem

Eclipsa Audio is not designed to replace established immersive formats such as Dolby Atmos, Sony 360 Reality Audio, or Ambisonics.

Instead, it occupies a complementary role within the immersive ecosystem:

- Dolby Atmos excels in cinema, premium music delivery, and large-scale installations
- Sony 360 Reality Audio focuses on music-first immersive streaming
- Ambisonics thrives in research, VR, and sound art

Eclipsa Audio sits alongside these as an open, platform-agnostic layer, particularly well suited to the web, interactive media, and scalable digital distribution.

Its importance lies less in how immersive sound is mixed, and more in how immersive sound is delivered and sustained across platforms over time.

5. Why Eclipsa Audio Matters Going Forward

Eclipsa Audio matters because immersive audio is moving into everyday digital spaces.

As spatial sound becomes part of:

- Online video
- Web-based storytelling
- Interactive and participatory media
- Education and creative platforms

there is a growing need for immersive formats that are open, adaptable, and future-proof.

Eclipsa Audio reflects a shift toward immersive sound as a baseline expectation, not a premium or specialist experience. It supports a future where spatial audio is as normal and widespread as stereo once became — embedded into the fabric of digital media rather than confined to specific industries or environments.

Immersive audio formats are constantly evolving. As new technologies emerge and existing formats change, this article will grow with them.

We’ll continue to update and expand this section to reflect new developments, new ways of working, and new ways of listening — so it’s always worth coming back to see what’s been added.